Smart Musical Instruments (SMIs) are digital instruments with key traits such as connectivity, sensors, and embedded intelligence, which they leverage to create new interactions between musicians, their instruments, and audiences. Mappings define the connections between an instrument’s inputs and outputs. The literature shows that complex mappings give musicians a high degree of control over their instrument, and that mappings also significantly affect an audience’s perception of the instrument. Creating complex mappings is therefore a necessary next step in building effective SMIs. In this work, we present a set of lightweight bindings between FAUST and libmapper, two popular tools in the digital musical instruments space. We demonstrate how these bindings enable users to quickly create complex mappings between their own inputs and arbitrary FAUST synthesizers at run-time, allowing researchers and musicians to focus on creating the mappings that best suit their individual requirements.

Matthew Peachey and Joseph Malloch. FAUSTMapper: Facilitating Complex Mappings for Smart Musical Instruments. In Proceedings of the 4th International Symposium on the Internet of Sounds (IS2), Pisa, Italy, 2023.
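To make the idea of a "complex" mapping concrete, the following minimal Python sketch shows a many-to-many mapping in which each synthesis parameter is a weighted blend of several inputs. It does not use the FAUSTMapper, FAUST, or libmapper APIs; the sensor names, parameter names, weights, and ranges are all hypothetical and chosen only for illustration.

```python
# Hypothetical sensor and synth-parameter names, chosen only for illustration.
# Each output parameter draws on more than one input (a many-to-many mapping).
WEIGHTS = {
    "cutoff_hz": {"pressure": 0.7, "tilt": 0.3},
    "gain": {"pressure": 0.5, "position": 0.5},
}

# Assumed output ranges for the two hypothetical parameters.
RANGES = {"cutoff_hz": (200.0, 8000.0), "gain": (0.0, 1.0)}


def map_inputs(inputs):
    """Map normalized inputs (0..1) to synth parameters via weighted blends."""
    outputs = {}
    for name, weights in WEIGHTS.items():
        # Blend the contributing inputs, then clamp to the 0..1 range.
        blend = sum(w * inputs[src] for src, w in weights.items())
        blend = min(1.0, max(0.0, blend))
        # Scale the normalized blend into the parameter's range.
        low, high = RANGES[name]
        outputs[name] = low + blend * (high - low)
    return outputs


params = map_inputs({"pressure": 1.0, "tilt": 0.0, "position": 0.5})
print(params)
```

Here a single gesture (pressing harder) changes both the filter cutoff and the gain, which is the kind of coupling that a one-to-one mapping cannot express.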

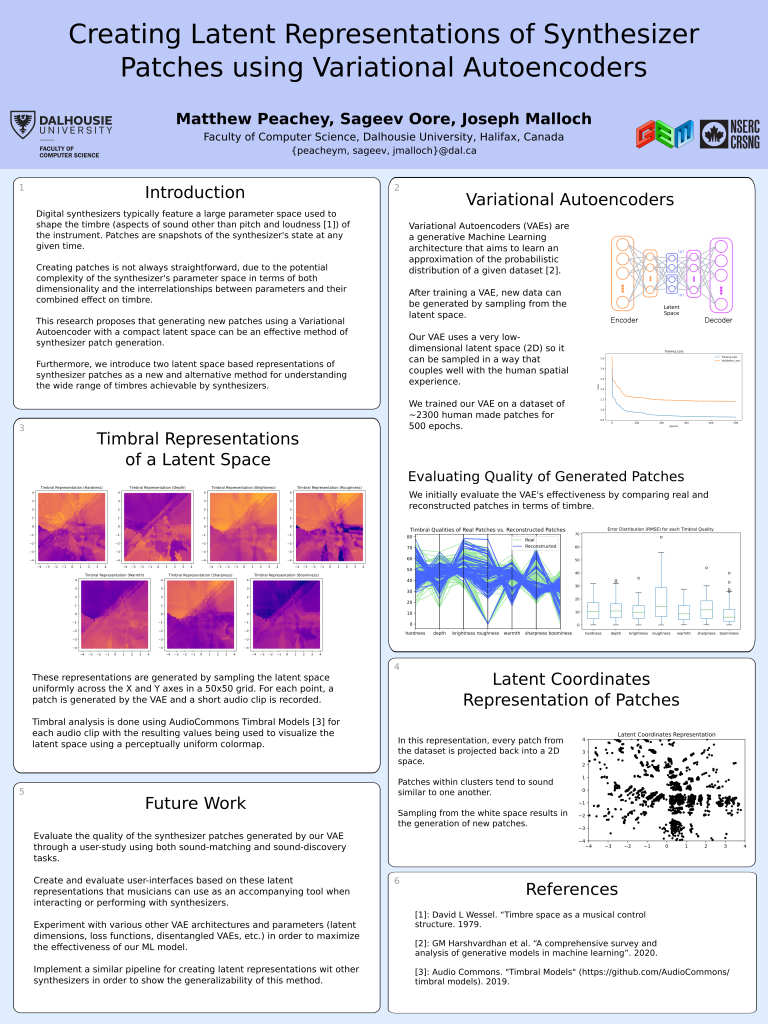

Creating Latent Representations of Synthesizer Patches using Variational Autoencoders

Digital synthesizers typically feature a parameter space (i.e. the set of user-adjustable parameters) that is used to shape the sound (or timbre) of the instrument. A synthesizer patch is a snapshot of the state of the instrument’s parameter space at a given time and is the representation most familiar to synthesizer users. Creating patches can be repetitive, tedious, and complicated for synthesizers with large parameter spaces. This paper presents the creation and use of latent representations of synthesizer patches generated by training a Variational Autoencoder (VAE) on a library of existing patches. We demonstrate how to generate previously unseen patches by interpolating through the latent space. Using the open-source synthesizer amSynth as a test bed, we evaluate reconstructed patches against a ground truth both numerically and timbrally, and show how generating new patches from the latent space results in diverse yet musically pleasing timbres.

Matthew Peachey, Sageev Oore, and Joseph Malloch. Creating Latent Representations of Synthesizer Patches using Variational Autoencoders. In Proceedings of the 4th International Symposium on the Internet of Sounds (IS2), Pisa, Italy, 2023.
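As a rough illustration of interpolating through a latent space, the sketch below walks in a straight line between two latent vectors and decodes each point into a patch. It is not the paper's implementation: `toy_decoder` is a hypothetical stand-in for a trained VAE decoder, and the two-dimensional latent vectors are made-up values.

```python
import math


def lerp(z_a, z_b, t):
    """Linearly interpolate between two latent vectors (t = 0 gives z_a, t = 1 gives z_b)."""
    return [(1.0 - t) * a + t * b for a, b in zip(z_a, z_b)]


def interpolate(z_a, z_b, steps):
    """Return `steps` evenly spaced latent vectors from z_a to z_b inclusive (steps >= 2)."""
    return [lerp(z_a, z_b, i / (steps - 1)) for i in range(steps)]


def toy_decoder(z):
    """Stand-in only: squashes each latent value into a 0..1 parameter value.

    A real system would call the trained VAE's decoder network here.
    """
    return [1.0 / (1.0 + math.exp(-v)) for v in z]


# Made-up latent codes standing in for two encoded patches.
z_a = [-1.0, 0.5]
z_b = [1.0, -0.5]

path = interpolate(z_a, z_b, steps=5)
patches = [toy_decoder(z) for z in path]

for z, patch in zip(path, patches):
    print(z, patch)
```

Each decoded point along the path is a candidate patch that appears in neither endpoint, which is the sense in which interpolation can yield previously unseen patches.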

Mapping Tools Virtual Workshop 2022

On 1 September 2022 we held the third annual workshop on the mapping tools project (libmapper and surrounding tools). This year we had presentations and discussions covering:

- recent related projects and publications, including ForceHost and Mapper4Live

- updates to libmapper, language bindings, and user interfaces

- integration with FAUST and Ableton Live

- (re)release of the pySignalPlotter utility

- a proposal for adding quaternion support to the libmapper expression engine

- a proposal for a standard MIME type for referring to libmapper objects, enabling drag-and-drop mapping between signals

- a proposal for expression-language extensions for supporting “self-timed” periodic map updates and synchronized transports

- a proposal for adding support for “datasets” to libmapper

Video documentation of the workshop will be posted at a later date.

Mapper4Live

Using Control Structures to Embed Complex Mapping Tools into Ableton Live

This paper presents Mapper4Live, a plugin for the popular digital audio workstation Ableton Live. Mapper4Live exposes Ableton’s synthesis and effect parameters on the distributed libmapper signal mapping network, providing new opportunities for interaction between software and hardware synths, audio effects, and controllers. The paper explores the plugin’s uses and relevance in research, music production, and musical performance settings, and details its development along with ideas for future work on the project.

Boettcher, B., Malloch, J., Wang, J., & Wanderley, M. M. (2022). Mapper4Live: Using Control Structures to Embed Complex Mapping Tools into Ableton Live. NIME 2022. https://doi.org/10.21428/92fbeb44.625fbdbf